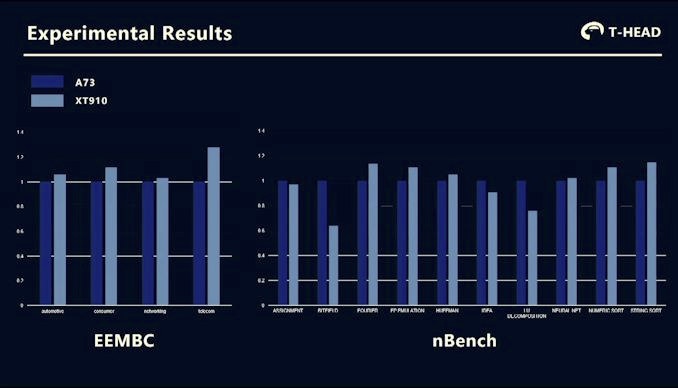

In this section, we summarize everything that you must know to accelerate deep learning workloads with TF32 Tensor Cores. A100 speedups over V100 FP32 for PyTorch, TensorFlow or MXNet using NGC containers 20.08 and 20.11 with models from NVIDIA Deep Learning Examples. Thus, TF32 is a great starting point for models trained in FP32 on Volta or other processors, while mixed-precision training is the option to maximize training speed on A100.įigure 5. Furthermore, switching to mixed precision with FP16 gives a further speedup of up to ~2x, as 16-bit Tensor Cores are 2x faster than TF32 mode and memory traffic is reduced by accessing half the bytes. However, speedups observed for networks in practice vary, since all memory accesses remain FP32 and TF32 mode doesn’t affect layers that are not convolutions or matrix multiplies.įigure 5 shows that speedups of 2-6x are observed in practice for single-precision training of various workloads when moving from V100 to A100. Training speedupsĪs shown earlier, TF32 math mode, the default for single-precision DL training on the Ampere generation of GPUs, achieves the same accuracy as FP32 training, requires no changes to hyperparameters for training scripts, and provides an out-of-the-box 10X faster “tensor math” (convolutions and matrix multiplies) than single-precision math on Volta GPUs. From left to right: ResNet50, Mask R-CNN, Vaswani Transformer, Transformer-XL. Accuracy values throughout training in FP32 (black) and TF32 (green) for various AI workloads.

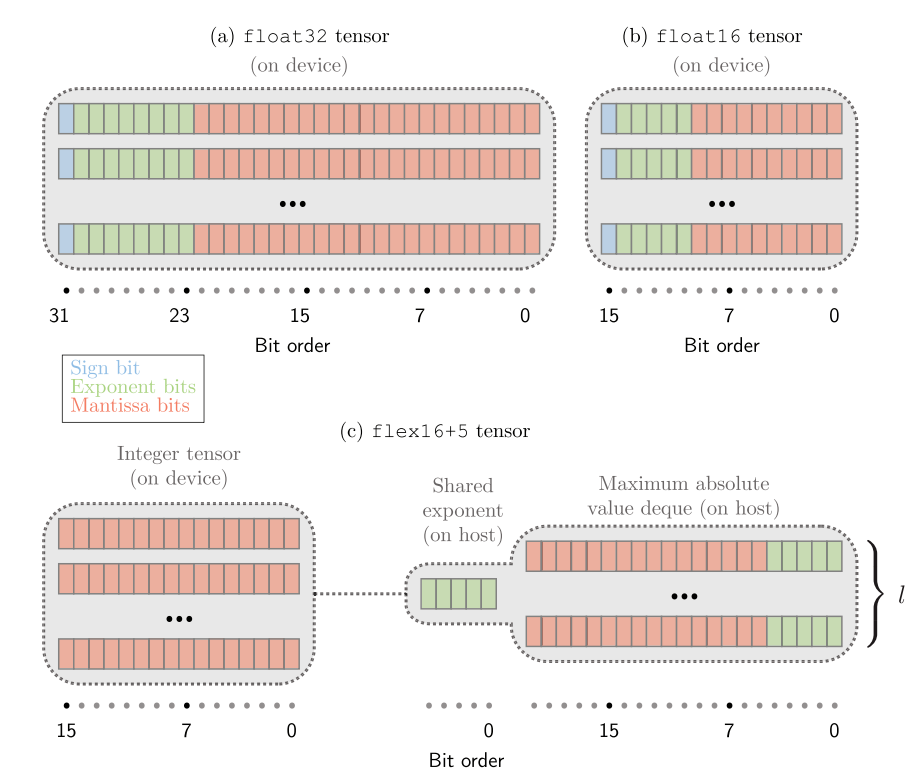

Dot product computation, which forms the building block for both matrix multiplies and convolutions, rounds FP32 inputs to TF32, computes the products without loss of precision, then accumulates those products into an FP32 output (Figure 1).įigure 4. TF32 is a new compute mode added to Tensor Cores in the Ampere generation of GPU architecture. It’s also worth pointing out that for single-precision training, the A100 delivers 10x higher math throughput than the previous generation training GPU, V100. Table 1 shows the math throughput of A100 Tensor Cores, compared to FP32 CUDA cores.
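The dot-product behavior described above can be emulated on the CPU to get a feel for the numerics. The following is a minimal sketch, not NVIDIA's implementation: it assumes TF32 keeps FP32's 8 exponent bits and 10 explicit mantissa bits, and it assumes round-to-nearest-even when dropping the low 13 mantissa bits (the hardware's rounding mode and accumulation order may differ). The helper names `to_fp32`, `round_to_tf32` and `tf32_dot` are hypothetical, and NaN inputs are not handled.

```python
import struct


def to_fp32(x: float) -> float:
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def round_to_tf32(x: float) -> float:
    """Round x to a TF32-representable value (assumed: 10 mantissa bits).

    Assumption: round-to-nearest-even on the 13 dropped FP32 mantissa bits.
    NaN inputs are not handled in this sketch.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    lsb = (bits >> 13) & 1                      # lowest bit that is kept
    bits = (bits + 0x0FFF + lsb) & 0xFFFFFFFF   # add rounding bias
    bits &= 0xFFFFE000                          # clear the 13 dropped bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def tf32_dot(a, b) -> float:
    """Emulated TF32 dot product with FP32 accumulation."""
    acc = 0.0
    for x, y in zip(a, b):
        # Two 11-bit significands multiply to at most 22 bits,
        # so the product is exact in Python's FP64 arithmetic.
        prod = round_to_tf32(x) * round_to_tf32(y)
        # Accumulate, then round the running sum back to FP32.
        acc = to_fp32(acc + prod)
    return acc


if __name__ == "__main__":
    a = [0.1, 0.2, 0.3]
    b = [1.5, 2.5, 3.5]
    fp32_reference = to_fp32(sum(to_fp32(x) * to_fp32(y) for x, y in zip(a, b)))
    print("TF32-emulated dot:", tf32_dot(a, b))
    print("FP32 reference   :", fp32_reference)
```

Comparing the two printed values shows the size of the error introduced by rounding the inputs; the accumulation itself stays in FP32, which is the point the paragraph above makes.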

NVIDIA Ampere GPU architecture introduced the third generation of Tensor Cores, with the new TensorFloat32 (TF32) mode for accelerating FP32 convolutions and matrix multiplications. TF32 mode is the default option for AI training with 32-bit variables on Ampere GPU architecture. It brings Tensor Core acceleration to single-precision DL workloads, without needing any changes to model scripts. Mixed-precision training with a native 16-bit format (FP16/BF16) is still the fastest option, requiring just a few lines of code in model scripts.
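To make the trade-offs between these formats concrete, the sketch below tabulates bit layouts and derived properties for FP64, FP32, TF32, FP16 and BF16. The bit widths are the commonly documented ones and are not stated in the text above, so treat them as assumptions of this example; the `FloatFormat` class and its fields are hypothetical names used only for illustration. TF32 is listed with 4 bytes of storage because it is a compute mode: values stay in FP32 in memory.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FloatFormat:
    name: str
    exponent_bits: int
    mantissa_bits: int   # explicitly stored fraction bits
    storage_bytes: int   # bytes per value in memory

    @property
    def epsilon(self) -> float:
        """Spacing between 1.0 and the next representable value."""
        return 2.0 ** -self.mantissa_bits

    @property
    def max_finite(self) -> float:
        """Largest finite value for an IEEE-style layout."""
        bias = 2 ** (self.exponent_bits - 1) - 1
        return (2.0 - self.epsilon) * 2.0 ** bias


FORMATS = [
    FloatFormat("FP64", 11, 52, 8),
    FloatFormat("FP32", 8, 23, 4),
    FloatFormat("TF32", 8, 10, 4),  # compute mode; data stays FP32 in memory
    FloatFormat("FP16", 5, 10, 2),
    FloatFormat("BF16", 8, 7, 2),
]
BY_NAME = {fmt.name: fmt for fmt in FORMATS}

if __name__ == "__main__":
    print(f"{'format':<6} {'exp':>3} {'mant':>4} {'bytes':>5} {'epsilon':>10} {'max finite':>12}")
    for fmt in FORMATS:
        print(
            f"{fmt.name:<6} {fmt.exponent_bits:>3} {fmt.mantissa_bits:>4} "
            f"{fmt.storage_bytes:>5} {fmt.epsilon:>10.3e} {fmt.max_finite:>12.3e}"
        )
```

Read this way, TF32 pairs FP32's exponent range with FP16's mantissa precision, while the native 16-bit formats additionally halve the bytes moved per value.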